AI Use Is Surging in Schools, But Where Are the Safeguards?

- Ben Duggan

- Oct 22, 2025

Updated: Jan 5

By Ben | Taught AI Academy

AI has officially entered the classroom. In 2025, the majority of UK teachers say they use AI tools regularly, whether for planning, differentiation, admin, or communication. Confidence is growing fast too: 87% of educators say they feel completely or somewhat comfortable using AI at work.

But here’s the concern: the policies, guidance, and safeguarding protections that should sit alongside AI use are either missing or unknown to the staff they are meant to guide.

And the data is alarming.

What the Numbers Tell Us

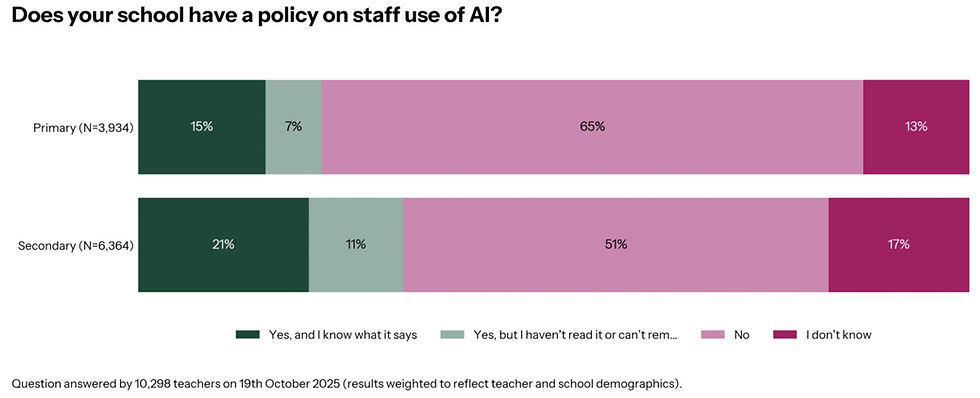

A recent national survey asked over 10,000 teachers whether their school has a policy on staff AI use.

Here’s what they said:

- Only 15% of primary and 21% of secondary teachers say their school has an AI policy and they know what it says.

- 65% of primary and 51% of secondary teachers say their school has no AI policy at all.

- 13% (primary) and 17% (secondary) simply don’t know if a policy exists.

Even among those who say their school has a policy, many admit they haven’t read it or can’t remember what it says.

A Growing Safeguarding Risk

This policy vacuum poses a serious safeguarding risk. AI tools are now being used to write parent emails, generate feedback, create lesson content—and yes, sometimes with real pupil data entered into the prompt.

Without clear, enforced guardrails, there’s nothing stopping:

- Sensitive information being shared with public models

- Pupil names being entered into unsecured AI systems

- Staff unknowingly breaching GDPR or safeguarding protocols

- Conflicting messages about what “safe use” actually looks like

Let’s be blunt: if roughly 1 in 6 secondary teachers and 1 in 8 primary teachers don’t know whether a policy even exists, the chances of misuse, unintentional or not, are already high.

Teachers Are Not “AI Shy.” They’re Just Undersupported

Interestingly, the survey also revealed that teachers are not scared of AI—they’re open to it. In fact, 87% describe themselves as “completely” or “somewhat” comfortable using AI in their work.

Confidence also rises significantly when staff have read and understood their school’s AI policy:

74% of teachers who had read their school’s AI policy felt “completely comfortable” discussing AI with colleagues.

This drops to just 53% among those who don’t know whether a policy exists.

This shows the power of clear guidance: teachers don’t need pushing—they need supporting.

What Schools Should Do Now

This is a moment of both urgency and opportunity. Schools must act—before policy gaps become safeguarding incidents. Here’s what I recommend:

1. Write a Clear, Staff-Facing AI Policy

Cover safeguarding, GDPR, usage boundaries, age-specific rules, and clear do/don’t guidance.

2. Deliver CPD on Safe Prompting & Use

Teachers should know how to use AI tools in a way that’s aligned with school values, inclusive practice, and legal responsibilities.

3. Audit Current AI Usage in Your School

Find out what tools staff are using, how they’re using them, and where the risks (or benefits) lie.

4. Appoint an AI Lead or Working Group

Every school needs a go-to person or team to review tools, test prompts, support staff, and update the policy regularly.

Final Thought: AI Isn’t the Problem. Silence Is.

Teachers are embracing AI. But we’re sending them into this new world without a map.

If schools want to keep children safe, support staff, and use AI meaningfully, they must stop treating AI as a future issue. It’s already here. What’s missing is clear leadership, transparent policy, and proper training.

Because safeguarding isn’t optional. And in the AI age, silence is a risk we can’t afford.
